Breaking Inertia, Embracing Change: The Most Important Thing for 2026 and Beyond

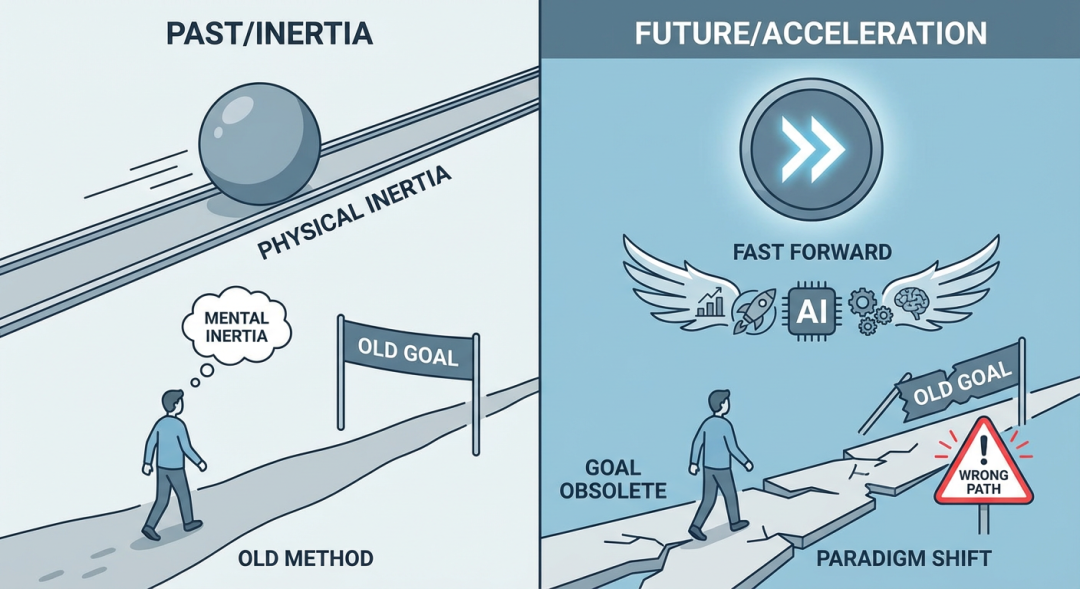

Physical inertia keeps objects in their current state of motion, while human inertia keeps us inclined to pursue our existing goals using our existing methods.

Unfortunately, the coming era is likely to be the most dramatically changing period in human history. AI is about to give wings to every industry, amplifying individual capabilities by orders of magnitude. The world seems to have hit the fast-forward button. In this context, the boundary conditions for "what's right" are shifting rapidly: a goal that is meaningful today might be meaningless a month from now. Paradigm shifts that used to take years can now happen in months or even weeks. In the past, inertia did little harm because paradigms changed slowly; today, the greater our inertia, the further we might slide down the wrong path.

The Intensity and Speed of Change Are Unprecedented

This might sound too abstract. As someone in computer science, let me use software engineering as an example to illustrate just how dramatic these changes are. Software engineering (in the narrow sense) is essentially using scientific methods to build software well—correctly, economically, and maintainably.

Over the past few decades, software engineering practices have undergone several revolutions, but mainly to adapt to new infrastructure (like microservices architecture for cloud computing) or new development rhythms (like agile development for rapid internet iteration). The fundamental principles haven't changed much: if you had picked up a twenty-year-old software engineering textbook in 2022, most of it would still have applied.

But in the past two years, especially 2025, I feel like I'm standing on a high-speed conveyor belt—the ground beneath my feet keeps accelerating, and a moment's inattention could throw me off.

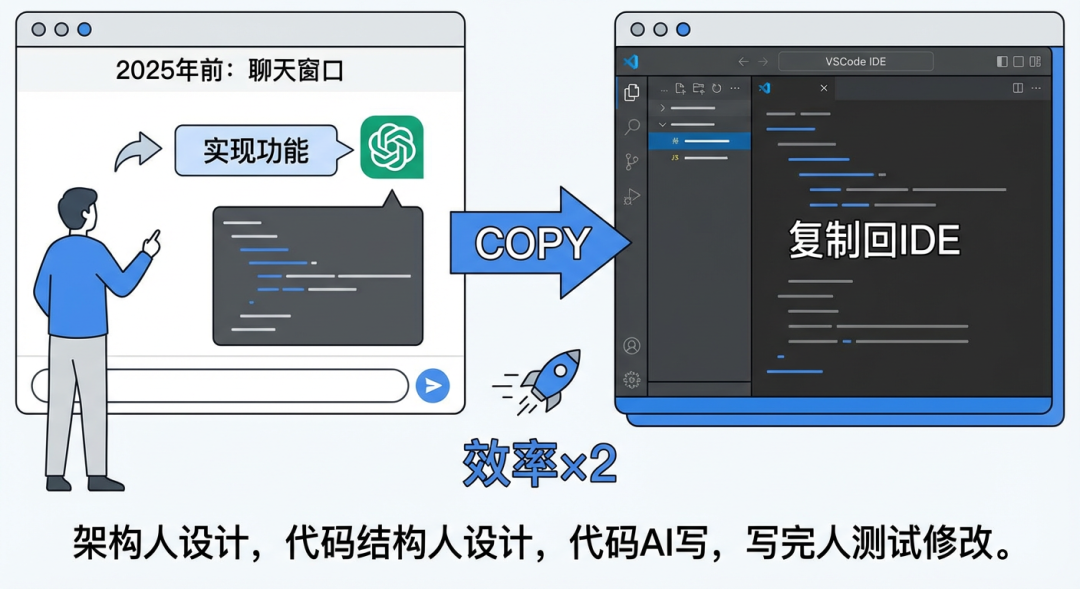

Before 2025: Write Code in Chat Windows, Copy to IDE, 2× Development Efficiency

We'd ask ChatGPT to implement a feature, then copy the code back into VS Code. Development speed roughly doubled, but nothing fundamentally changed: humans did the architecture, humans designed the code structure, AI wrote the code, and humans tested and fixed it.

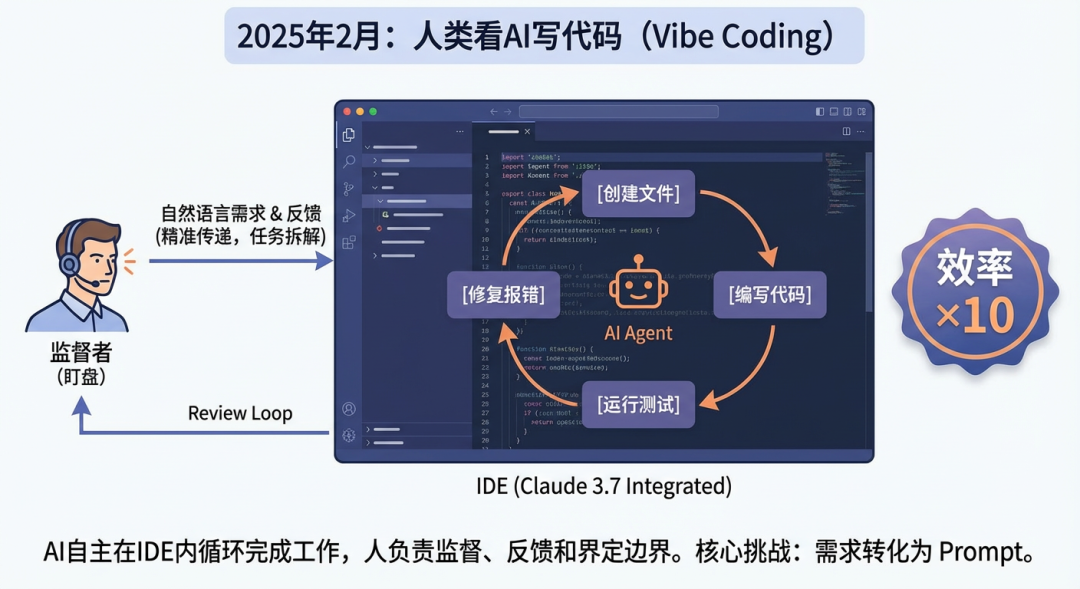

February 2025: Humans Watch AI Write Code, 10× Development Efficiency

Claude 3.7 Sonnet was released, with significant improvements in agentic programming capabilities. For the first time, AI could autonomously create files, write code, run tests, and fix errors in the IDE, rather than just outputting code snippets in a chat window. This made Vibe Coding truly viable. We'd describe requirements in natural language, then supervise the AI's writing process like watching a stock ticker, reviewing whether the code was correct and providing feedback for corrections. The core challenge at this stage was no longer the technology itself, but how to precisely convey requirements to the model and decompose tasks according to the model's capability boundaries. I wrote an article at the time, "How to Make Vibe Coding Actually Work," describing the workflow under this paradigm.

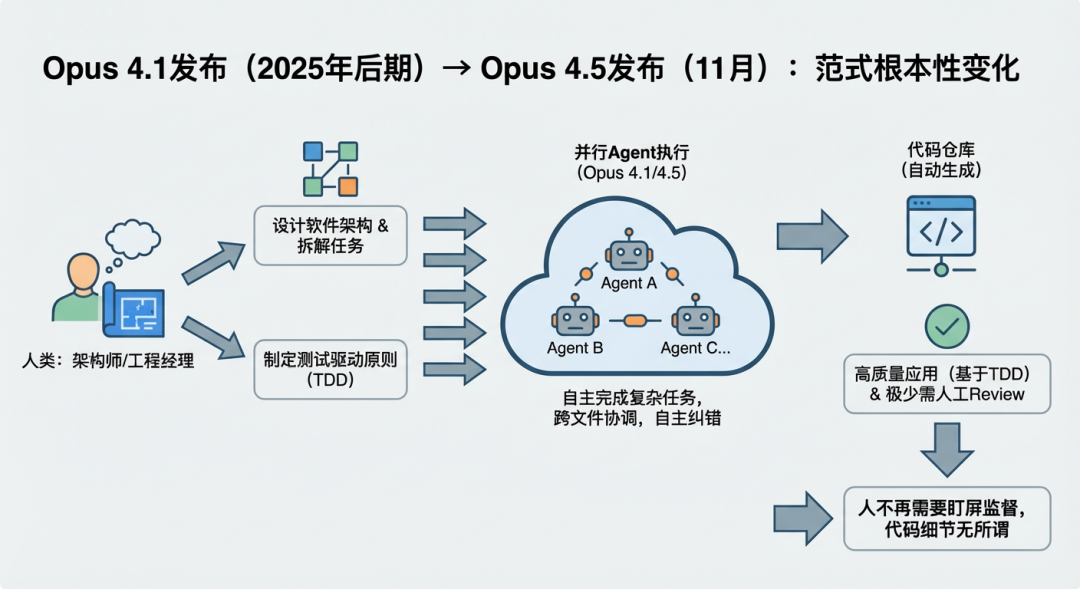

August 2025: Humans Decompose Tasks, AI Develops in Parallel, 50× Development Efficiency

Opus 4.1 was released, with significant improvements in context understanding and cross-file coordination, plus more reliable self-correction, enabling it to independently complete complex tasks with high accuracy and without frequent human intervention. This brought a fundamental paradigm shift: humans no longer needed to monitor the screen. We played the role of architects and engineering managers, designing software architecture, decomposing tasks into parallelizable subtasks, and launching multiple Agents to program in parallel. Applying test-driven development principles gave us reasonable assurance of code quality and functional correctness. You could glance at the code, but it was no longer necessary; what exactly was happening in the code didn't really matter anymore. This change became increasingly recognized after Opus 4.5 was released in November.
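To make the workflow above a little more concrete, here is a minimal Python sketch of the "decompose, run in parallel, gate on tests" loop. It is only an illustration under stated assumptions: `Subtask`, `run_agent`, `develop`, and `develop_in_parallel` are hypothetical names invented for this example, and `run_agent` is a stub standing in for whatever coding Agent you actually launch, not any real tool's API.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass
from typing import Callable


@dataclass
class Subtask:
    name: str
    spec: str  # natural-language requirement handed to the agent
    # Acceptance test written by the human before any code exists (the TDD gate).
    acceptance_test: Callable[[str], bool]


def run_agent(spec: str) -> str:
    """Hypothetical stand-in for a coding agent; a real one would call a model."""
    return f"# generated code for: {spec}"


def develop(task: Subtask, max_attempts: int = 3) -> tuple[str, bool]:
    """Let one agent retry a subtask until its acceptance test passes."""
    for _ in range(max_attempts):
        code = run_agent(task.spec)
        if task.acceptance_test(code):
            return task.name, True
    return task.name, False


def develop_in_parallel(tasks: list[Subtask]) -> dict[str, bool]:
    """Run one agent per subtask concurrently and report which ones passed."""
    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        return dict(pool.map(develop, tasks))
```

The human's contribution in this sketch is the list of `Subtask` objects: the decomposition and the acceptance tests. Everything between the spec and the pass/fail result is left to the Agents, which is why reading the generated code becomes optional.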

February 2026: Humans Describe Requirements, AI Develops End-to-End, ???× Development Efficiency

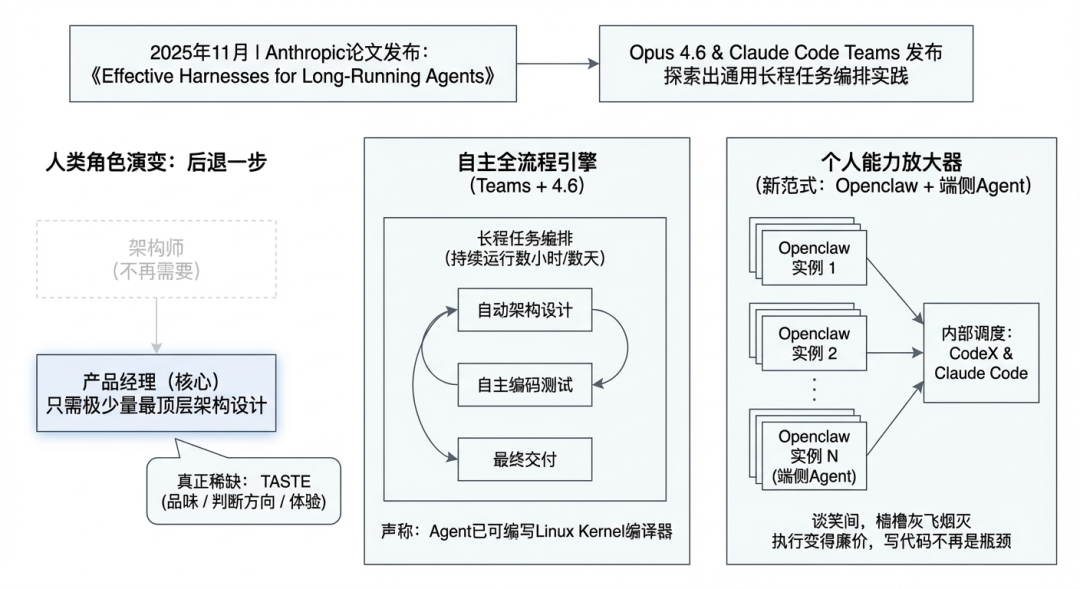

Opus 4.6 and Claude Code Teams were released, establishing fairly universal practices for long-running task orchestration: Agents can now run continuously for hours or even days, autonomously completing the entire process from architecture design to coding and testing. This means even the hands-on architect role is largely no longer needed; for large projects, we mostly just need to be product managers. This paradigm was foreshadowed in Anthropic's November 2025 article "Effective Harnesses for Long-Running Agents." When 4.6 was released, Anthropic claimed that with Teams + 4.6, Agents could write a compiler capable of compiling the Linux kernel. Humans have taken another step back: minimal participation in top-level architectural design is now enough to complete large software development.

Combined with the emergence of new paradigms like OpenClaw for edge-side Agents, individual capability amplification has reached unprecedented levels. The most aggressive experts I know routinely run a dozen OpenClaw instances simultaneously, with each instance orchestrating Codex and Claude Code internally. As the classical line goes, "amid talk and laughter, the enemy's warships turn to ash." When execution becomes this cheap and writing code is no longer the bottleneck, what's truly scarce emerges: taste. That means knowing what's worth doing, what direction is right, and what experience is good.

The Danger of Inertia Is Obvious

All these changes didn't happen over a decade—they happened in less than a year. If you're still doing things the way you've always done them, you might not realize that the things themselves are becoming unimportant. In terms of efficiency, those who have mastered the new paradigms are already ten or even a hundred times more efficient than you. This gap cannot be bridged through hard work alone, because it's not a gap in speed—it's a gap in dimensions.

Stay vigilant: Are the goals I'm pursuing still meaningful? Are the methods I'm using still effective? The world has changed—have I? This might be the most important question for each of us in 2026 and beyond.

AI will reshape almost all knowledge work.

The Future Is Here—Face It with Courage

At this point, some might feel uneasy. I do too, sometimes.

Such dramatic and rapid change means the future is becoming increasingly unpredictable. We humans instinctively fear such uncertainty.

But I want to look at this from a different angle. I believe each of us has things we truly want to accomplish. And the rapid development of AI essentially makes these things easier to achieve.

Take myself as an example: In security, I've always wanted to create universal vulnerability discovery tools. Technically, I pursue the ability to handle anything—successfully developing whatever I want to develop, successfully reverse engineering whatever I want to reverse engineer. In terms of general capabilities, I hope to have unique taste and grasp of fundamental principles for getting things done. Spiritually, I want to thoroughly study Confucianism, Buddhism, Taoism, Western philosophy, and scientific thought to grasp the Dao. The emergence of AI has made many of these aspirations more achievable than before.

Of course, we shouldn't be overly optimistic either. Throughout history, every productivity leap has ultimately led to mostly positive outcomes, but the process often involves tremendous pain—ordinary people frequently become casualties of transition, and pioneers who take one wrong step become martyrs. Add to this signs that the world order established since 1945 is showing cracks, with great powers striving to rebalance peacefully—where the future leads, no one can say for certain.

But the future is here, and all we can do is face it with courage. In the new year, I wish everyone a better dance with AI.